The recent US military operation in Iran featured the deployment of Anthropic’s Claude AI, shortly after President Trump ordered federal agencies to stop using the technology. The strikes, which occurred on a Saturday, marked a significant and controversial moment in the intersection of artificial intelligence and military strategy.

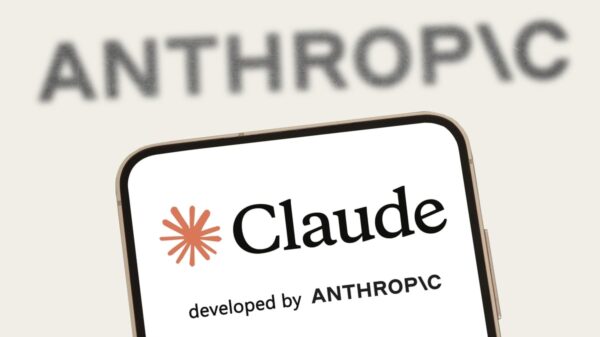

According to sources, US Central Command utilized Claude for crucial tasks such as intelligence assessments, target identification, and simulating battle scenarios. This operation was executed in collaboration with Israel and resulted in the death of Iran’s Supreme Leader, Ayatollah Ali Khamenei, a development that has led to a declared mourning period in Iran.

On the day before the airstrikes, Trump had directed all federal entities to “immediately cease” using Anthropic’s tools, labeling the organization “leftwing nut jobs” and expressing concerns over the potential risks to “American lives.” The Pentagon responded by designating Anthropic a “supply chain risk,” initiating a plan to phase out its systems over six months.

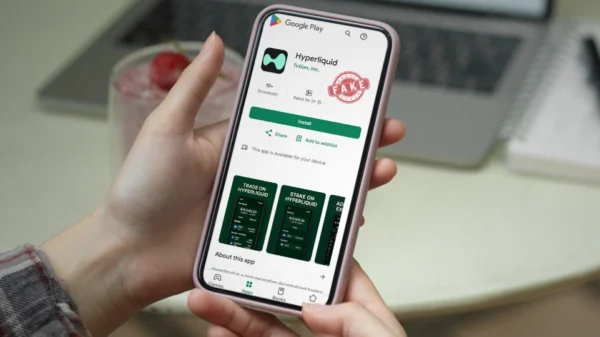

The tension between Anthropic and the Pentagon had been escalating for months, particularly as the military sought unrestricted access to Claude for any lawful military purpose. However, Anthropic firmly rejected these demands, emphasizing its commitment to ethical guidelines that prevent domestic surveillance and the use of AI in autonomous weaponry.

In a statement, Anthropic affirmed that “no amount of intimidation or punishment” from the Department of War would alter its stance on these ethical concerns. Prior to the airstrikes, Claude had already been integrated into military operations through collaborations with companies such as Palantir and Amazon Web Services, and it had played a role in earlier operations, including the capture of Venezuelan President Nicolás Maduro.

In a striking development, shortly after the Pentagon severed ties with Anthropic, OpenAI announced a new agreement to provide its AI models for use within the classified networks of the Department of Defense. OpenAI’s CEO, Sam Altman, highlighted that this partnership aligns with their ethical principles, which include prohibitions against mass surveillance and the necessity for human oversight in weaponized AI applications.

Despite the ongoing rivalry, both Anthropic and OpenAI are gearing up for potential initial public offerings, with significant funding rounds recently completed. OpenAI’s valuation has reportedly soared to $730 billion, while Anthropic secured $30 billion in funding earlier this year.

As the military’s reliance on AI technology deepens, experts predict that it will take several months to replace Claude across all military systems, given how extensively it is integrated with existing partners. The implications of these developments will continue to unfold as debates on the ethical use of AI in warfare intensify.