Anthropic has raised serious allegations against three Chinese artificial intelligence firms, accusing them of using fraudulent accounts to extract data from its AI system, Claude. The companies identified in the claims are DeepSeek, Moonshot AI, and MiniMax, which reportedly created over 24,000 fake accounts to access the system.

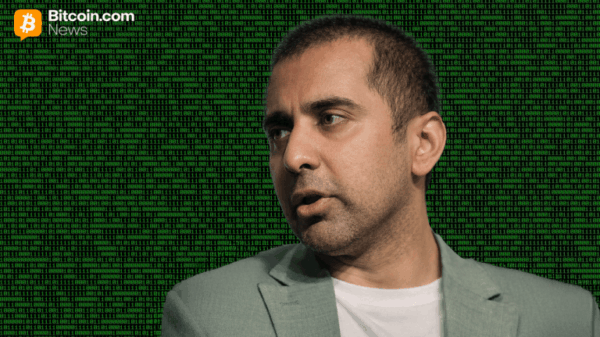

According to Anthropic, these firms sent more than 16 million prompts to Claude, gathering responses and patterns intended for retraining their own models. Anthropic describes the activity as a form of “distillation,” a technique in which the outputs of one AI model are used to improve another. Dario Amodei, who leads Anthropic, said the scale of the operation points to a deliberate strategy to rapidly enhance the firms' AI capabilities.

Specifically, DeepSeek accounted for 150,000 interactions with Claude, while Moonshot AI sent over 3.4 million prompts and MiniMax surpassed 13 million. Together, these figures are consistent with the combined total of more than 16 million prompts. Anthropic emphasizes that querying its systems at this volume reflects a clear intention to derive value from them quickly.

The incident adds to existing concerns about DeepSeek, as OpenAI had previously accused the firm of employing similar tactics to replicate its systems. In a recent communication to lawmakers, OpenAI claimed that DeepSeek was attempting to imitate its products through massive prompt volumes.

While Anthropic acknowledges that distillation can serve legitimate purposes, such as facilitating the development of smaller models, it cautions that the same techniques can be weaponized to create competing systems much faster and at a lower cost. Synthetic data has become a pivotal part of training large foundation models, especially as high-quality real data remains scarce.

The implications of these actions extend beyond corporate competition, raising national security concerns. Anthropic warns that foreign entities distilling American AI models might subsequently integrate these capabilities into military and surveillance systems.

In response to these threats, Anthropic recently launched a new security tool designed for Claude, which scans for vulnerabilities in software code and proposes solutions. The company plans to host an enterprise briefing shortly, where further product announcements are expected.

The market reacted swiftly to these developments, with cybersecurity stocks experiencing declines. Notable companies like CrowdStrike and Zscaler saw their shares drop around 9 percent, while others, including Netskope and SailPoint, faced similar downturns. More broadly, new AI tools are shaking the foundations of traditional security services, and established software companies like Salesforce and ServiceNow have also reported significant losses this year.